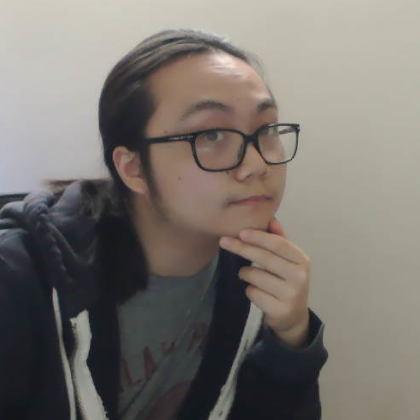

shuhongchen

shuhong (shu) chen

陈舒鸿

info

email: shu[a]dondontech.com

web: shuhongchen.github.io

github: ShuhongChen

myanimelist: shuchen

linkedin: link

cv: latest

academic

google scholar: TcGJKGwAAAAJ

orcid: 0000-0001-7317-0068

erdős number: ≤4

rants

I think rants should be short; short rants are hard.

Possibly more rants to come…

AI in my art

Moved to the artwork page.

graphics is the future of computer vision

2021-08

I believe that to truly “understand” data, we must analyze its generative process. For images, generation is a well-studied physical process: light bounces around a scene and enters a camera. We can even simulate image synthesis accurately through computer graphics. However, today's most powerful image understanding models work at the pixel level, without 3D representations or light transport.

I think this is only a matter of scalability: a lack of data and infrastructure. The amount of high-quality real-world 3D data available is still orders of magnitude behind that of images; similarly, the hardware and software to collect and process 3D data types have yet to be widely democratized. With the advancing commercialization of depth imaging and AR/VR, graphics may soon become an integral part of image understanding.